An often overlooked and misunderstood concept in application development is the one involving secure hashing of passwords. We have evolved from plain text password storage, to hashing a password, to appending salts and now even this is not considered adequate anymore. In this post I will discuss what hashing is, what salts and peppers are and which algorithms are to be used and which are to be avoided.

Hashing

Hashing is a type of algorithm which takes any size of data and turns it into a fixed-length of data. This is often used to ease the retrieval of data as you can shorten large amounts of data to a shorter string (which is easier to compare). For instance let’s say you have a DNA sample of a person, this would consist of a large amount of data (about 2.2 – 3.5 MB), and you would like to find out to who this DNA sample belongs to. You could take all samples and compare 2.2 MB of data to all DNA samples in the database, but comparing 2.2 MB against 2.2 MB of data cant take quite a while, especially when you need to traverse thousands of samples. This is where hashing can come in handy, instead of comparing the data, you calculate the hash of this data (in reality, several hashes will be calculated for the different locations on the chromosomes, but for the sake of the example let’s assume it’s one hash), which will return a fixed length value of, for instance, 128 bits. It will be easier and faster to query a database for 128-bits than for 2.2 MB of data.

The main difference between hashing and encryption is that a hash is not reversible. When we are talking about cryptographic hash functions, we are referring to hash functions which have these properties:

- It is easy to compute the hash value for any given message.

- It is infeasible to generate a message that has a given hash.

- It is infeasible to modify a message without changing the hash.

- It is infeasible to find two different messages with the same hash.

The hash function should be resistant against these properties:

- Collisions (two different messages generating the same hash)

- Pre-image resistance: Given a hash h it should be difficult to find any message m such that h = hash(m).

- Resistance to second-preimages: given m, it is infeasible to find m’ distinct from m and such that MD-5(m) = MD-5(m’).

Modern Hashing Algorithms

Some hashing algorithms you may encounter are:

- MD-5

- SHA-1

- SHA-2

- SHA-3

MD-5

MD-5 is a hashing algorithm which is still widely used but cryptographically flawed as it’s prone to collisions. MD-5 is broken in regard to collisions, but not in regard of preimages or second-preimages. The first attacks on MD-5 were published in 1996, this was in fact an attack on the compression of MD-5 rather than MD-5 itself. In 2004 a theoretical attack was produced which allowed for weakening the pre-image resistance property of MD-5. In practice the attack is way too slow to be useful.

SHA

SHA or Secure Hashing Algorithm is a family of cryptographic hash functions published by the National Institute of Standards and Technology (NIST) as a U.S. Federal Information Processing Standard (FIPS). Currently three algorithms are defined:

- SHA-1: A 160-bit hash function which resembles the earlier MD-5 algorithm. This was designed by the National Security Agency (NSA) to be part of the Digital Signature Algorithm. Cryptographic weaknesses were discovered in SHA-1, and the standard was no longer approved for most cryptographic uses after 2010.

- SHA-2: A family of two similar hash functions, with different block sizes, known as SHA-256 and SHA-512. They differ in the word size; SHA-256 uses 32-bit words where SHA-512 uses 64-bit words. There are also truncated versions of each standardized, known as SHA-224 and SHA-384. These were also designed by the NSA.

- SHA-3: SHA-3 is not yet defined. NIST is working on the exact parameters they will use; SHA-3 will be Keccak, or “close enough”, but not necessarily the Keccak which was submitted (it is a configurable function, and they seem to want to tweak the parameters a bit differently than what was first proposed).

Note that while SHA-1 is “cryptographically broken” the properties we seek in a password hashing algorithm are still valid. In the real world finding a password hashing algorithm built on SHA-1 is still secure in the sense, that if it’s implemented there is no reason to assume it should be immediately changed to something newer.

Strong passwords

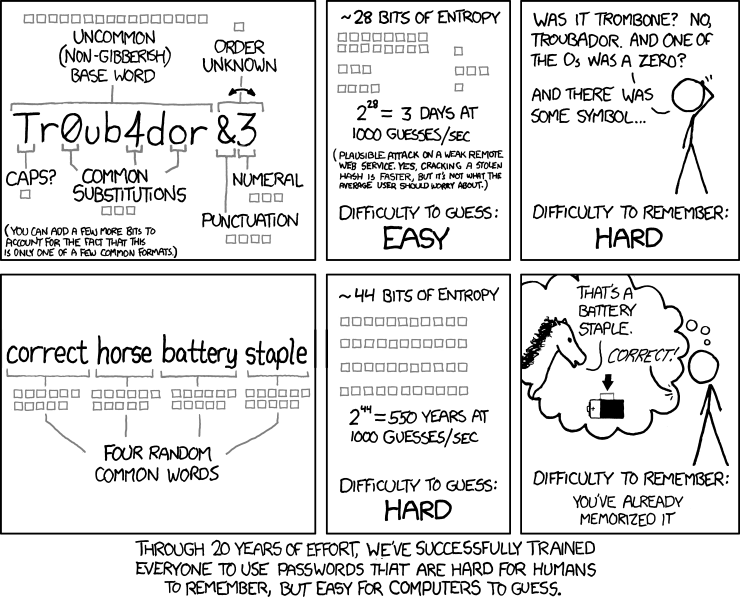

Apart from choosing a good hashing algorithm you should also force your users to choose a password which is built up of at least eight, random characters. Unfortunately people aren’t designed to remember and generate random sequences of characters. This is why we force our users to make passwords which contain numbers, letters, signs and at least one capital letter. But how does this help in regard to password hashing?

To attack hashed passwords there are different strategies:

- Dictionary Attacks

- Bruteforce

- Rainbow Tables (generate everything upfront in a database and do a look up for each hash)

With a dictionary attack you will try to use word lists, these can consist of mostly used passwords, words, names, years, etc. For each word you will run the hashing algorithm and see if the generated hash is the same as the hash in the database. If this is the case then you know that the word from which you derived the hash is the password.

With a bruteforce attack you will try all possible combinations of characters. When using passwords of at least eight characters long, only using the ASCII characters set, there are 128^8 possibilities of passwords.

To show the importance of the length of a password:

These days, using a single, modern GPU, you can process about 10.323.000.000 passwords per second when bruteforcing plain MD-5. With this speed, when using a password of eight random characters, it will take about eighty days to generate every single possibility. This single GPU only costs about 500 USD (AMD Radeon 6990). People have actually constructed clusters which contain 25 of these cards, optimized it and managed to generate 350 billion passwords per second. This means they can generate all possible passwords of eight random characters long in less than two days.

Now when you add one character to the password, the possibilities will be 128^9. With previous calculation of 350 billion it will now take 305 days. 10 characters -> 106 years. This seems long, but we need to take into account Moore’s law:

Moore’s law is the observation that, over the history of computing hardware, the number of transistors on integrated circuits doubles approximately every two years. The period often quoted as “18 months” is due to Intel executive David House, who predicted that period for a doubling in chip performance (being a combination of the effect of more transistors and their being faster).

Computers have become faster and faster over the years, which is something we need to take into account. From a cryptographical point of view, 106 years is still a short period. We want infinity (something which will take several hundred-thousand to millions of years).

Hashing Passwords

Why do we hash passwords?

We hash passwords because in the event an attacker gets read access to our database, we do not want him to retrieve the passwords plain text. Remember often we store usernames, email addresses and other personal information in our database. Security rule #1 dictates that users need to be protected from themselves. We can make them aware of the risks, we can tell them not to re-use passwords, but we all know that in the end there will still be people who use the same password for their Facebook, Gmail, Linkedin and corporate email. What you do not want is that when the attacker gets his hand on your database, he immediately has access to all the above accounts (usernames/email addresses will be the same).

Hashing passwords is to prevent this from happening, when the attacker gets his hands on your database, you want to make it as painful as possible to retrieve those passwords using a brute-force attack. Hashing passwords will not make your site any more secure, but it will perform damage containment in the event of a breach.

Properties

Now that you have a small overview of hashing algorithms let’s dive into password hashing. Password hashing requires the following properties:

- Have a unique salt per password (salt may only be used once across the database containing all password hashes) to prevent a bruteforce attack of compromising the data in one run

- Fast on software (executing the function once must be relatively fast)

- Slow on hardware (executing the function concurrently should be slow, this to prevent brute forcing on distributed systems)

Salt and Pepper

A salt is a non-secret, unique value in the database which is appended (depending on the used algorithm) to the password before it gets hashed. Note that the only requirement of a salt should be that it is unique in the database, which means that random generation does not need to be cryptographically random. A salt is used to prevent Rainbow Table lookups (an attack where you calculate a table of hashes for passwords).

It should be noted that you should never use any user supplied information as salt. This to prevent that two databases (of another application for example) would return the same hash for a given password+salt. Also when bruteforcing, you can’t generate all possibilities in one run, you will need to run the bruteforce for each password taking the salt into account. This means that instead of being able to break all passwords in 2 days (as mentioned in previous paragraph), you will need to run the bruteforce process for each password taking into account the specific salt for that password. So this means it will take 2 days for every hash (1 day if you take probability into account) rather than 2 days for the complete database. It will also prevent people from using Rainbow Tables, as the table would need to be generated, per password, based on the salt. This is why salts are so important and why they should be unique for a database!

A pepper is a secret value (a key) which is used to turn the hash into a HMAC (The pepper does not necessarily mean HMAC. The pepper is best described as a secret key which turns the hash function into a MAC. There are good and bad ways to do a MAC; one good way is HMAC.). A HMAC is like a hashing function, but cannot be reproduced without knowing the key. This could increase security if an attacker would only have access to the database and not to the place where the key is actually stored. The problem is that often in data compromises an attacker will at least have access to the hard disk and in some cases even the memory. If the attacker can obtain the key, then the pepper does not add any security to the hashing algorithm.

Speed

A password hashing algorithm should be slow to prevent bruteforce attacks, pereferably it should have features which actually decrease the feasibility of a distributed brute force attack on the hashes. This immediately throws out the following hashing algorithms:

- MD-5

- SHA-1

- SHA-2

- SHA-3

In their normal form all of the above hashing algorithms are actually unsuited for password hashing. They are all incredibly fast. People often think MD-5 is flawed for password hashing because of collision attacks, but this is not true, it’s because it’s an incredibly fast hashing algorithm. The same is true for SHA-3. I’ve seen people use SHA-3 for password hashing. They assume because it’s supposed to become the new standard that it’s suited for password hashing. Keccak (the name of the algorithm) was, by design, meant to be a fast hashing algorithm. This means it’s completely unsuited for password hashing in any form. So please note that not all hashing algorithms are suited for password hashing.

We can also add:

- MD-5, SHA-1, SHA-256 (and SHA-224) use 32-bit operations, which are very fast on x86 CPU but also on ARM and, crucially, on GPU as they exist today.

- SHA-512 (and SHA-384) use 64-bit arithmetic operations which make life harder for GPU, but also for 32-bit architectures (ARM in particular).

- SHA-3 (Keccak) uses only boolean operations, which makes it fast everywhere but also quite faster on FPGA (no carry propagation).

So what to do now? Well you still have a few options open if you want to use the hashes from the Secure Hashing Algorithm suite. But they need to be used in a PBKDF2 implementation (and even then not every one of them is suited), which we will discuss below.

Hashing Passwords: algorithms

There are currently three algorithms which are safe to use:

- PBKDF2

- bcrypt

- scrypt

PBKDF2

PBKDF2 is an algorithm which is used to derive keys. It wasn’t intended for password hashing, but due to it’s property of being slow, it lends itself quite well for this purpose. The resulting derived key (HMAC) can actually be used to securely store passwords. It’s not the ideal function for password hashing, but it’s easy to implement and it’s built upon SHA-1 or SHA-2 hashing algorithms (any HMAC will do, but these are the most common used ones and the most secure ones). Wait, didn’t you say SHA-1 and SHA-2 were bad to use when hashing passwords? Yes indeed, that’s why we use PBKDF2 to make the hashing a lot slower. You still will need to choose your hashing algorithm carefully, PBKDF2+Keccak is a substantially worse choice than PBKDF2+SHA-256, which is already somewhat worse than PBKDF2+SHA-512 if your server is a 64-bit PC.

To derive a key PBKDF2 does the following:

DK = PBKDF2(PRF, Password, Salt, c, dkLen)Where DK is the derived key, PRF is the preferred HMAC function (this can be a SHA-1/2 HMAC, the password is used as a key for the HMAC and the salt as text), c is the amount of iterations and dkLen is the length of the derived key. A salt should, by definition of the standard, be at least 64-bits of length and the minimum amount of iterations should be 1024. What the algorithm will do is SHA-1-HMAC(password+salt), and repeat the calculation 1024 times on the result. This means the hashing of a password will be 1024 times slower. Still this does not actually offer a lot of protection when bruteforcing on distributed systems or GPU (Graphic Processing Unit).

There’s also a caveat when the password exceeds 64 bytes, the password will be shortened by applying a hash to it by the PBKDF2 algorithm so it does not exceed the block size. For instance when using HMAC-SHA-1 a password longer than 64 bytes will be reduced to SHA-1(password), which is 20 bytes in size. This means passwords longer than 64 bytes do not provide additional security when it comes to breaking the key used to make the HMAC, but may even reduce security as the length of the key will be reduced (note that even when reduced to 20 bytes, currently our great-great-great-great-great-great-great-great-great-great grand children will be long dead before the key is brute forced).

bcrypt

bcrypt is currently the defacto secure standard for password hashing. It’s derived from the Blowfish block cipher which, to generate the hash, uses look up tables which are initiated in memory. This means a certain amount of memory space needs to be used before a hash can be generated. This can be done on CPU, but when using the power of GPU it will become a lot more cumbersome due to memory restrictions. Bcrypt has been around for 14 years, based on a cipher which has been around for over 20 years. It’s been well vetted and tested and hence considered the standard for password hashing.

There is actually one weakness, FPGA processing units. When bcrypt was originally developed it’s main threat was custom ASICs specifically built to attack hash functions. These days those ASICs would be GPUs (password bruteforcing can actually still run on GPU, but not in full parallelism) which are cheap to purchase and are ideal for multithreaded processes such as password bruteforcing.

FPGAs (Field Programmable Gate Arrays) are similar to GPUs but the memory management is very different. On these chips bruteforcing bcrypt can be done more efficiently than on GPUs, but if you have a long enough password it will still be unfeasable.

scrypt

For password hashing, the current fashion is to move the problem away to another level; instead of doing a lot of hash function invocations, concentrate on an operation which is hard for anything else than a PC, e.g. random memory accesses. That’s what scrypt is about. Scrypt is another hashing algorithm which has the same properties as bcrypt, except that when you increase rounds, it exponentially increases calculation time and memory space required to generate the hash. Scrypt was created as response to evolving attacks on bcrypt and is completely unfeasable when using FPGAs or GPUs due to memory constraints. Scrypt requires the storage of a series of intermediate state data “snapshots”, which are used in further derivation operations. These snapshots, stored in memory, grow exponentially compared when rounds increase. So adding a round, will make it exponentially harder to brute force the password. Scrypt is still relatively new compared to bcrypt and has only been around for a couple of years, which makes it less vetted than bcrypt.

Conclusion and Acknowledgments

Passwords should be hashed with either PBKDF2, bcrypt or scrypt, MD-5 and SHA-3 should never be used for password hashing and SHA-1/2(password+salt) are a big no-no as well. Currently the most vetted hashing algorithm providing most security is bcrypt. PBKDF2 isn’t bad either, but if you can use bcrypt you should. Scrypt, while still considered very secure, hasn’t been around for a long time, so it doesn’t get recommended a lot, but it seems it will become the successor of bcrypt, once it has been around a bit longer. Note that while there are some caveats and password bruteforcing strategies for PBKDF2 and bcrypt, they are still considered unfeasable for strong passwords (passwords longer than 8 characters, containing numbers, letters, signs and at least one capital letter).

I would also like to thank Thomas Pornin for supplying so much insights, reviewing my cryptography assumptions and for suggesting amendments. I would also like to thank CodesInChaos for answering my questions regarding PBKDF2 key generation.

The part about PBKDF2 is a bit confusing between usage of SHA-1 and usage of an arbitrary PRF. Also it is not clear in what manner PBKDF-2 with SHA-3 would be worse than the SHA-2 variants – easier to brute-force?

SHA-3 is fast on hardware, it’s A LOT faster than SHA-2, that’s why it’s worse than SHA-2.

The suitability of a hash-function depends on how fast the function is in software compared to how expensive it is to implement in hardware. both SHA-2 and SHA-3 are relatively cheap in hardware, but SHA-3 is probably even a bit more hardware friendly.

The quality of your software implementation is pretty important for this ratio as well. For example .net’s implementation(

Rfc2898DeriveBytes) is horribly slow compared to a good implementation. With a good implementation you can choose a higher iteration count (and thus higher security) at the same performance cost.Small correction: saying an 8-character random password has 128^8 possibilities is not completely accurate. The first 32 (and the last 1) ASCII characters are control characters and are not considered “printable”. For a lot of them, the browser will treat them specially (tab, delete, and CR/LF come to mind). It’s very unlikely that they would be accepted by a login form. 95 is a better base to use (an 8-character random password being 95^8).

As usual, a blog article about security frightens me! It’s really about time that operating systems implement two way authentication via phone.

It’s the only thing that makes me feel safe for my email account, and I’d like to feel the same way about my databases and user accounts in other places.